The key problem, Manasiev stresses, is that technology for creating deepfake content is becoming increasingly accessible and sophisticated, while detection systems are not advancing at the same pace.

Author: NarativAI

Aleksandar Manasiev, a researcher, media expert, and president of NarativAI – Center for Media Innovation in the Balkans, said in an interview with RTCG Portal that the first video recordings attributed to fugitive Miloš Medenica show strong indications of being AI-generated content, with an estimated probability of around 80 percent. However, he warns that without access to the original files, it is impossible to reach an absolute conclusion.

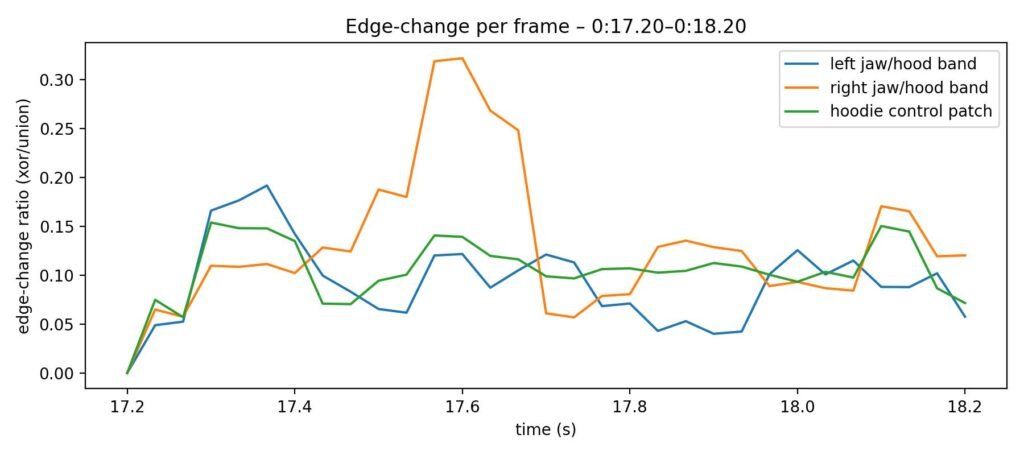

Manasiev emphasizes that when assessing authenticity, there is no single indicator that can independently confirm that content is AI-generated.

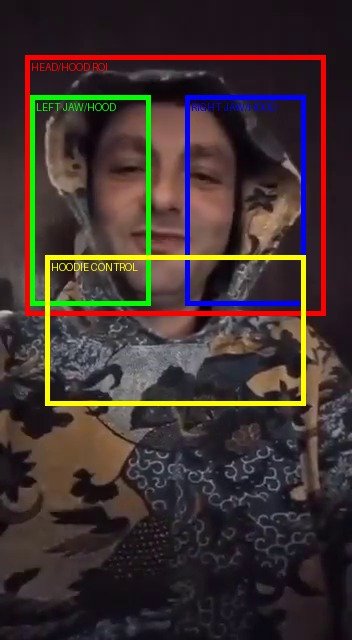

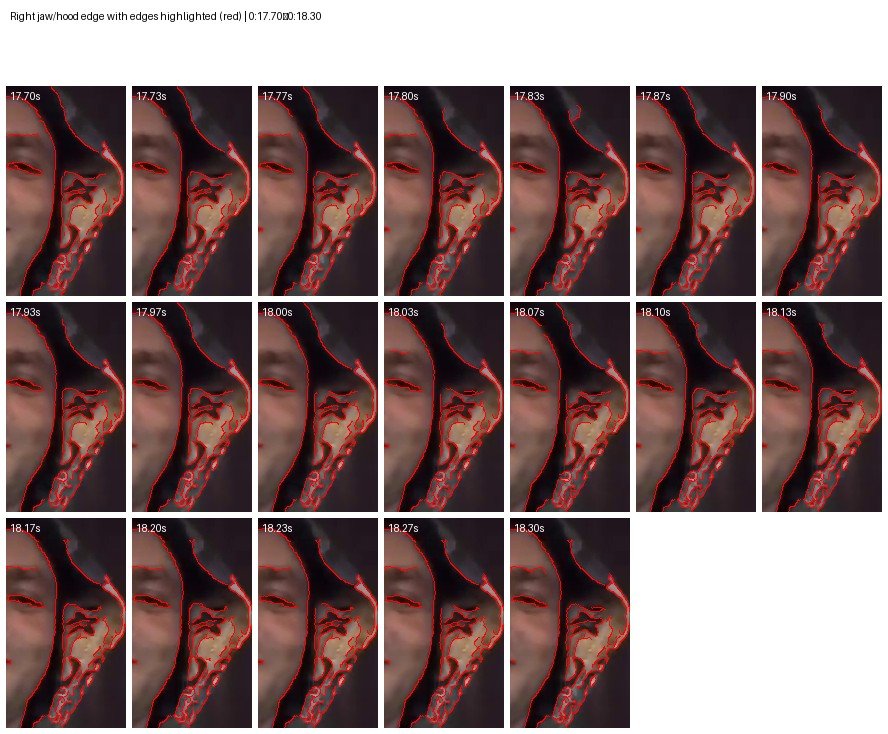

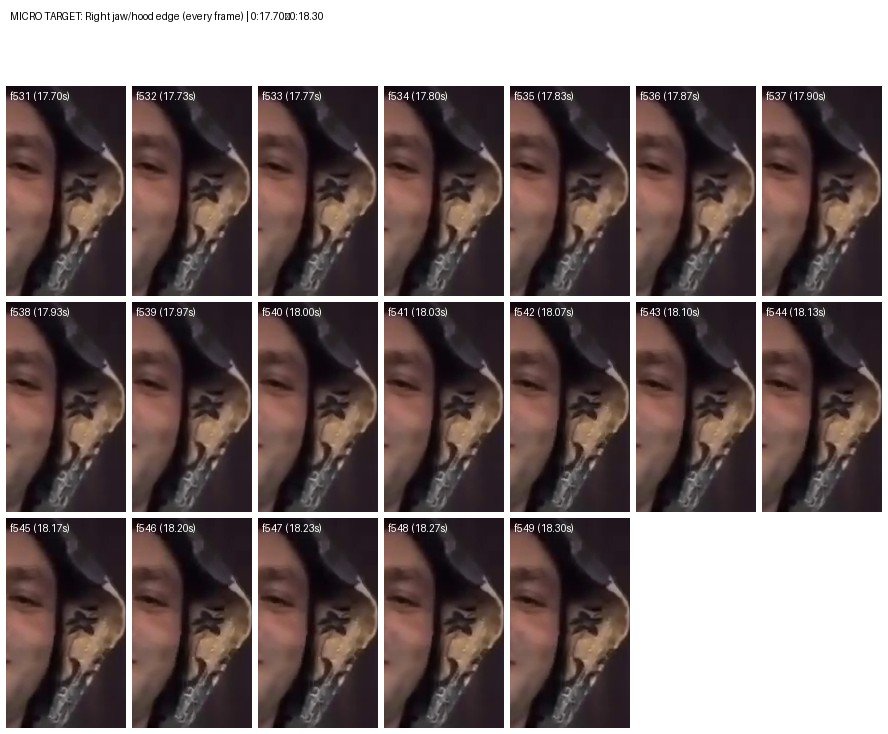

“There is no single indicator that represents proof on its own. A reliable assessment is always based on a combination of multiple elements: visual, audio, and contextual. In video analysis, we examine facial behavior in relation to the head and surroundings, lip-sync with speech, skin stability, eye and light consistency, as well as temporal coherence across multiple frames,” Manasiev explains.
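To give a rough sense of what "temporal coherence across multiple frames" can mean in practice, the sketch below measures how much each frame differs from the previous one and flags transitions that stand far above the clip's typical level. It is only an illustration under stated assumptions, not the method Manasiev or any forensic tool uses: frames are assumed to be already decoded into flat lists of grayscale values, and the function names, the `factor` threshold, and the `floor` value are hypothetical choices made for this example.

```python
from statistics import mean, median


def frame_differences(frames):
    """Mean absolute pixel difference between each pair of consecutive frames.

    Assumption: every frame is a flat list of grayscale values (0-255)
    and all frames have the same length.
    """
    diffs = []
    for prev, curr in zip(frames, frames[1:]):
        diffs.append(mean([abs(a - b) for a, b in zip(prev, curr)]))
    return diffs


def flag_abrupt_changes(diffs, factor=5.0, floor=1.0):
    """Indices of transitions that are far above the clip's typical change.

    A transition is flagged when its difference exceeds `factor` times the
    median difference. `floor` keeps the threshold from collapsing to zero
    on nearly static footage. Both values are illustrative, not calibrated.
    """
    if not diffs:
        return []
    threshold = factor * max(median(diffs), floor)
    return [i for i, d in enumerate(diffs) if d > threshold]


# Toy clip of six 4-pixel frames; the fourth frame is an isolated glitch.
frames = [
    [10, 10, 10, 10],
    [11, 10, 11, 10],
    [10, 11, 10, 11],
    [60, 62, 58, 61],
    [11, 10, 11, 10],
    [10, 10, 10, 10],
]

diffs = frame_differences(frames)
print(flag_abrupt_changes(diffs))  # transitions into and out of the glitch frame
```

A flagged transition is not evidence of manipulation on its own: ordinary scene cuts, camera shake, and compression artifacts produce the same kind of jump, which is why such a signal would only ever be one element among the several that the analysis combines.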

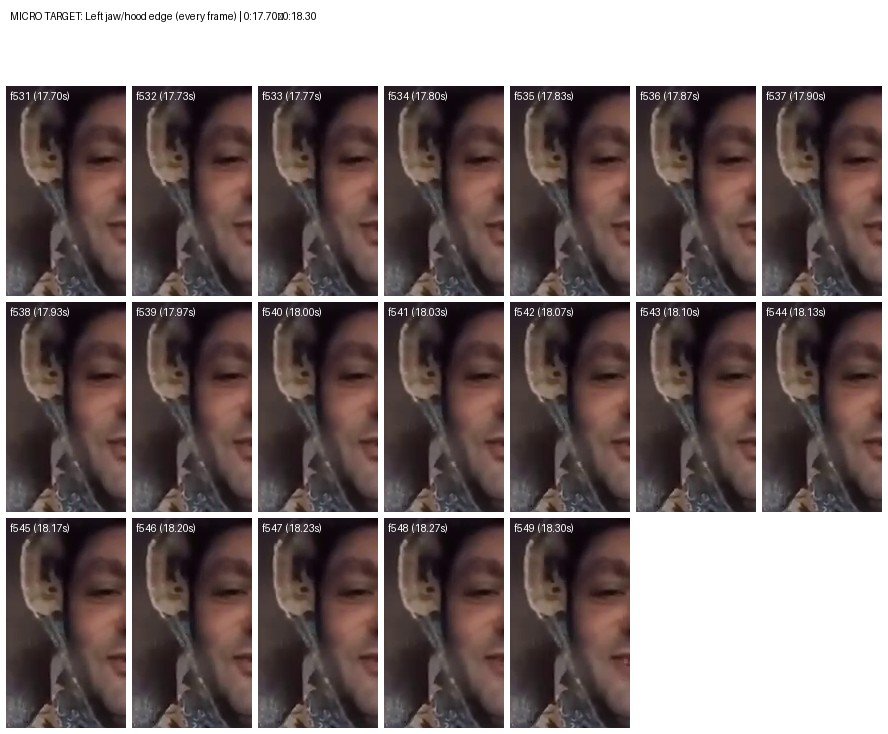

The problem, he adds, arises when videos are heavily compressed, recorded in poor lighting conditions, and repeatedly re-uploaded.

“In such cases, some forensic traces are lost or become very difficult to detect,” Manasiev notes.

Audio Even More Sensitive Than Video

According to Manasiev, the audio component is often even more vulnerable to manipulation.

As an illustration, he refers to the case of Slovak journalist Monika Tódová from Denník N, where AI-generated audio was used to create the impression of an authentic conversation.

“Modern tools can very convincingly imitate voice, intonation, and emotional tone, but they still make mistakes in subtle details: speech rhythm, pauses, breathing, and the contextual use of certain sentences. These nuances are crucial for serious analysis, yet they are not easy to detect without experience and comparative material,” says Manasiev.

He also warns of more sophisticated manipulation methods, where one person records a text in clear Montenegrin, using local dialect and natural intonation, after which that recording is used to train a model that imitates another person’s voice.

“Dialect, rhythm, and accent already exist in the base recording, while AI is used solely to change the voice. The result can be highly convincing, but also extremely manipulative content,” Manasiev explains.

First Medenica Videos – 80% Probability of AI Manipulation

Speaking specifically about the Miloš Medenica recordings, Manasiev says that analysis of the first video clips points to strong indicators of AI-generated content.

“The estimated probability is around 80 percent. In later videos, these indicators are less pronounced, but that does not prove their authenticity. On the contrary, it demonstrates how the quality of the original material, compression level, and recording conditions affect the possibility of reliable detection,” Manasiev emphasizes.

According to him, everything suggests that the creators of the videos are well aware of verification processes.

“Heavy compression and poor lighting—almost as if the videos were recorded on a ten-year-old mobile phone—may have been deliberately used to complicate the detection of potential manipulation,” says Manasiev.

The paradox, he adds, is that such material quality prevents a definitive conclusion that the videos are either authentic or fake.

“This is a typical effect of intentionally manipulated distribution methods,” Manasiev notes.

Context and Distribution Channel Are Crucial

Manasiev stresses that technical analysis alone is insufficient without considering the broader context.

“It is necessary to analyze whether the person appearing in the videos previously communicated in this way, whether they had a practice of publishing video messages or public statements, and if not, why this is happening now,” he explains.

Equally important, he adds, is the distribution channel.

“In this case, the content does not appear through a previously known or directly associated channel of that person, but through an X (formerly Twitter) profile, where it is unclear who is behind the account and with what intention. This combination of an unclear source, timing, and technical limitations further increases suspicion and indicates the need for extreme caution in interpreting the content,” Manasiev says.

Technology Advances Faster Than Detection

Manasiev notes that AI-generated photos are often easier to identify today due to anatomically illogical details or incorrect reflections, while video and audio are significantly more complex because they involve motion and temporal continuity.

“The key lies in combining technical tools with human forensic judgment. However, most advanced tools are paid and require technical expertise,” he explains.

An additional problem is that the public almost never has access to original files, but only to compressed versions from social networks, without metadata.

“When original material exists, analysis can be relatively fast and precise. When dealing with re-uploaded and compressed videos without a clear source, the process often ends with a probability estimate rather than an absolute conclusion. In such cases, we talk about percentages, not certainty,” Manasiev explains.

Live ‘Deepfake’ Use Possible—but Demanding

Regarding the use of deepfake technology in live broadcasts, Manasiev says it is theoretically possible to perform real-time face-swapping and synthetic speech generation.

However, such scenarios require serious technical infrastructure and strictly controlled recording conditions.

“In practice, low resolution, poor lighting, and a limited frame are often used to conceal technological flaws and make potential irregularities harder to detect,” Manasiev explains.

Relevant Institutions Should Respond

Manasiev believes that in such cases, relevant institutions—such as cybersecurity centers or national CERTs—should issue public statements.

“Their role is not to pass judgment, but to professionally explain what can and cannot be reliably determined, thereby reducing space for speculation and disinformation,” he says.

The key problem, Manasiev stresses, is that technology for creating deepfake content is becoming increasingly accessible and sophisticated, while detection systems are not advancing at the same pace.

“This imbalance is currently the biggest challenge—not only for journalists and researchers, but for institutions and society as a whole,” Manasiev concludes.

So far, seven videos allegedly featuring Miloš Medenica have been published on a single X profile. The Police Directorate has responded by claiming that the video is fake.

Medenica has been on the run since January 28, after the High Court in Podgorica sentenced him in a first-instance ruling to ten years and two months in prison.