Frame-by-frame review and free AI detectors highlight potential face-swap indicators in a viral clip allegedly showing fugitive Miloš Medenica speaking on camera.

Author: NarativAI

Several colleagues from Montenegro asked the NarativAI team to analyze a video circulating on Facebook that allegedly shows Miloš Medenica speaking on camera. Medenica was recently sentenced to 10 years in prison in a major corruption case and is now wanted by police after going on the run. To verify the clip, NarativAI combined a manual frame-by-frame review with AI-assisted analysis using ChatGPT (OpenAI), Google’s Gemini, and Anthropic’s Claude, then cross-checked the material using several free AI video detection tools (TruthScan, Video Detector, and Undetectable AI).

The findings were assessed against the official position of the Police Directorate of Montenegro, which stated publicly that the circulating clip was synthetically generated using AI (a “deepfake”).

In high-profile criminal cases, synthetic video can spread faster than verified information, shaping public perception and fueling misinformation. Even when footage looks “convincing,” establishing authenticity increasingly requires structured verification, not intuition.

Below is what we found, tool by tool, using the same verification structure throughout.

What NarativAI analyzed (ChatGPT-assisted frame review)

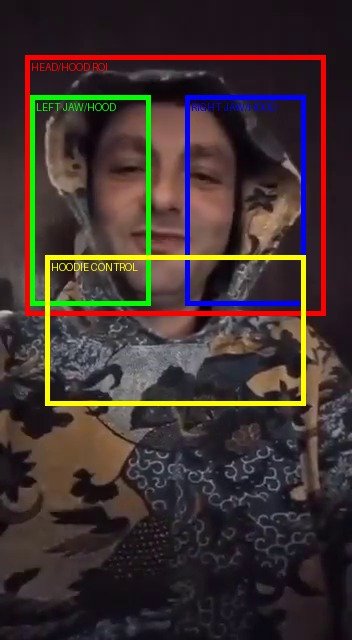

To understand how the video may have been manipulated, NarativAI conducted a targeted frame-by-frame review guided by ChatGPT, focusing on areas where deepfakes most commonly fail—especially the boundary between the face and the hood, where compositing seams often appear during speech and subtle head movement.

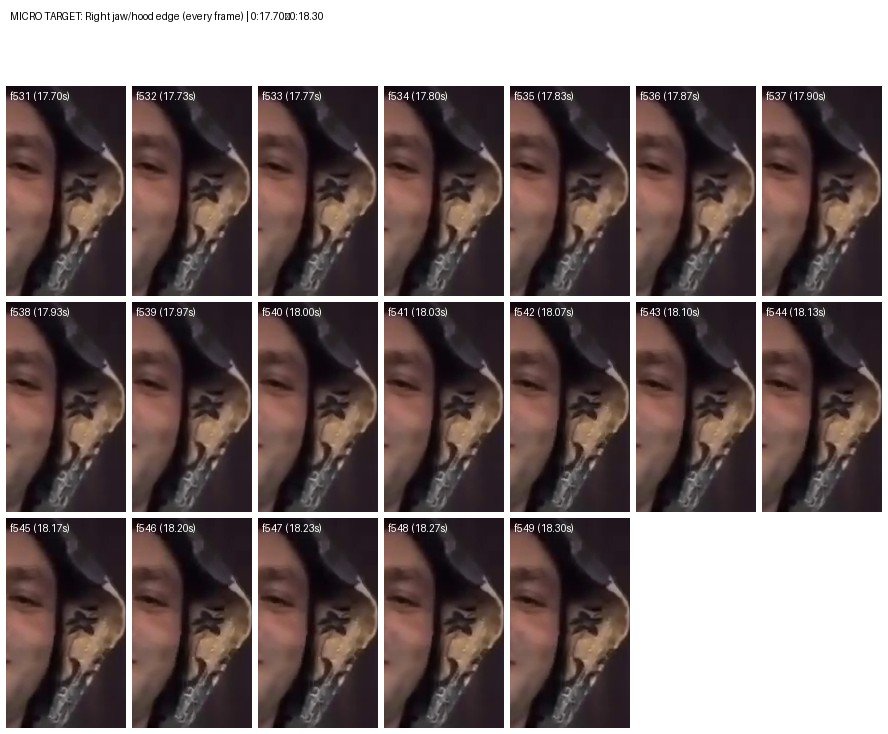

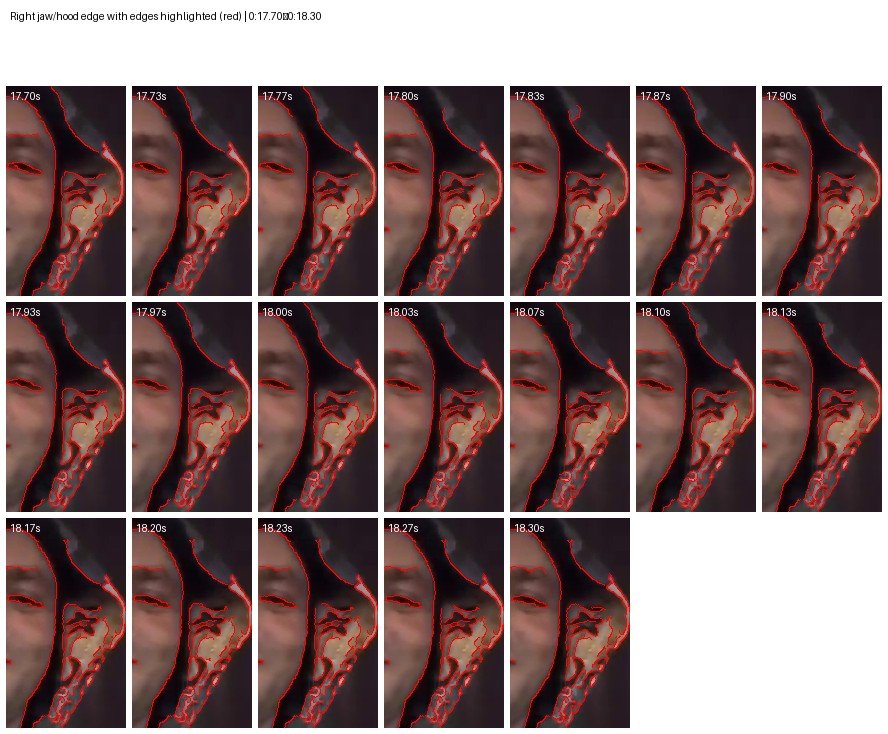

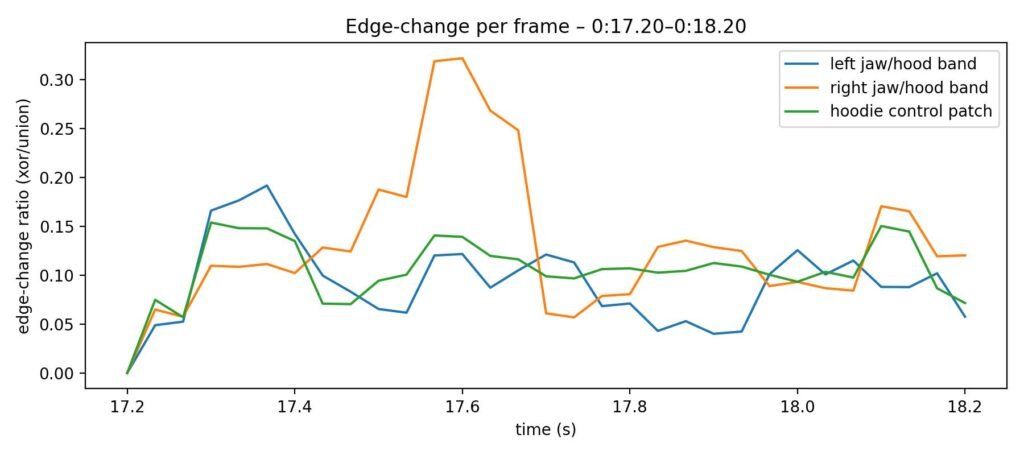

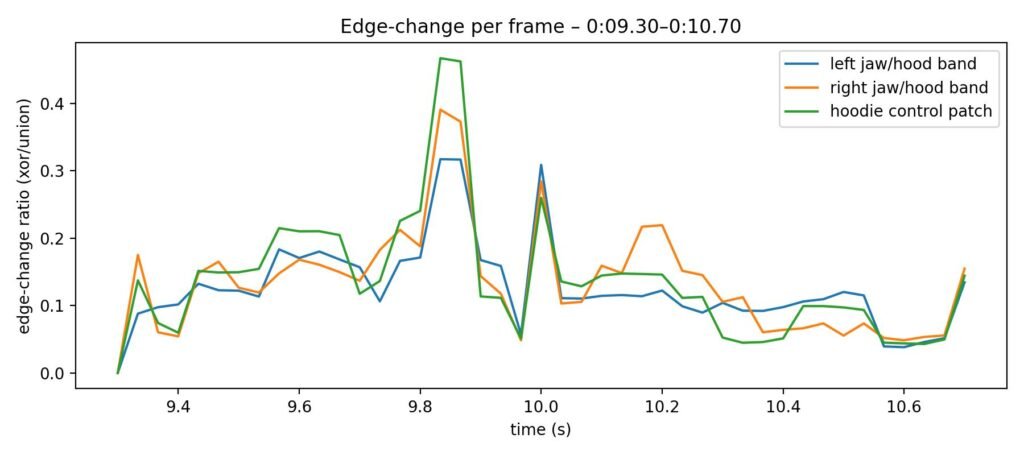

We examined multiple “stress segments” of the clip (0:03–0:06, 0:08–0:12, 0:13–0:17, and 0:16–0:20), and then micro-targeted the most motion-heavy moment for a deeper look. In the micro-analysis (0:17–0:18.30), we extracted every frame and applied edge overlays and frame-to-frame difference checks to determine whether instability was localized to the jaw/hood boundary or distributed across the entire image.

Because the circulating clip was shared on social media and likely reposted, it has undergone heavy compression and re-encoding. That can hide real manipulation clues or create false visual noise, so pixel-level indicators should be treated as supportive signals and cross-checked with additional tools and reliable confirmation.
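
The frame-to-frame difference check described above can be sketched roughly as follows. This is a minimal illustration, not the tooling NarativAI used: it assumes frames have already been extracted and converted to grayscale grids, and the names `mean_abs_diff`, `region_vs_background`, and the region coordinates are hypothetical. The idea is to compare how much pixels change inside a region of interest (for example, the jaw/hood boundary) with how much they change everywhere else.

```python
from typing import List, Tuple

Frame = List[List[int]]              # grayscale frame as rows of 0-255 values
Region = Tuple[int, int, int, int]   # (top, left, bottom, right), end-exclusive


def mean_abs_diff(a: Frame, b: Frame, region: Region, inside: bool) -> float:
    """Mean absolute pixel change between two frames, inside or outside a region."""
    top, left, bottom, right = region
    total, count = 0, 0
    for y, (row_a, row_b) in enumerate(zip(a, b)):
        for x, (pa, pb) in enumerate(zip(row_a, row_b)):
            in_region = top <= y < bottom and left <= x < right
            if in_region == inside:
                total += abs(pa - pb)
                count += 1
    return total / count if count else 0.0


def region_vs_background(frames: List[Frame], region: Region) -> List[Tuple[float, float]]:
    """For each consecutive frame pair, return (change inside region, change outside)."""
    return [
        (mean_abs_diff(prev, cur, region, True), mean_abs_diff(prev, cur, region, False))
        for prev, cur in zip(frames, frames[1:])
    ]


# Synthetic 4x4 frames, for illustration only (not data from the clip).
f0 = [[100] * 4 for _ in range(4)]
f1 = [row[:] for row in f0]
for y in (2, 3):
    for x in (2, 3):
        f1[y][x] = 120                         # change confined to the region
f2 = [[p + 5 for p in row] for row in f1]      # uniform change across the frame

print(region_vs_background([f0, f1, f2], (2, 2, 4, 4)))
# [(20.0, 0.0), (5.0, 5.0)]
```

In the first pair the change is confined to the region, which is the pattern a localized boundary flicker would produce. In the second pair the change is spread evenly, which is closer to what global compression noise or a brightness shift looks like. On a real re-encoded clip the two cases are rarely this clean, so the numbers are a prompt for closer inspection rather than a result.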

What we found in the frames

Across most segments, the face/hood boundary appears soft and noisy—largely consistent with low resolution and heavy platform compression, which can both obscure and mimic forensic indicators. We did not observe a consistently repeating “halo” seam around the jawline or a clear occlusion error (such as the hood edge cutting unnaturally into the cheek) that would serve as a standalone visual “smoking gun.”

However, the micro-targeted sequence around ~18.20–18.27 seconds showed the most concentrated boundary flicker on the right jaw/hood edge compared with adjacent frames. This localized instability is consistent with artifacts sometimes seen in synthetic facial manipulation, though—given the extent of re-encoding typical for social platforms—it is best treated as supporting evidence, not definitive proof on its own.

The strongest “risk indicators” clustered around face-boundary behavior (jawline/hood edge), a known weak point in face-swap workflows, while other elements of the scene appeared comparatively stable.

Additional AI-assisted reviews: Gemini and Claude

A review using Google Gemini identified visual artifacts consistent with face-swap techniques. The analysis noted that the hoodie/clothing pattern appeared relatively stable, which can be consistent with a workflow where a real body/background source is used while the face is synthetically altered. Gemini flagged inconsistencies along the jawline and mask boundary where the artificial face meets the hood, and assessed that these observations align with the police statement that the clip is manipulated.

A separate review using Anthropic Claude flagged anomalies that raise questions about authenticity, focusing on:

- jaw movement during speech (the jaw appearing comparatively stationary despite mouth articulation),

- possible blending artifacts at the face/neck boundary,

- potential lighting mismatches between face and body regions,

- and skin texture appearing unusually smooth relative to the clip’s overall compression.

NarativAI notes that AI-assisted model outputs can help prioritize where to look, but their conclusions can vary significantly depending on compression, re-uploads, and input quality. These findings are best treated as corroborative, not standalone proof.

We also used free AI video detection tools

NarativAI additionally tested the video with several free, publicly available AI video detection tools. The results were mixed, which is common for heavily compressed social media clips, but they provided further signals:

- TruthScan: returned a “Mixed” result with 43% probability and low confidence.

- Video Detector: flagged “High Risk”, with a Risk Score of 58/100 and 62% confidence, stating the video shows significant signs of manipulation.

- Undetectable AI: labeled the clip “AI-Generated” with 84% confidence.

Detector scores are not verdicts. They can help triangulate risk, but they can also disagree—especially on reposted and re-encoded video.
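
One way to keep such outputs side by side is a small tabulation like the sketch below. It uses only the figures reported above. The `summarize` helper and the 50-point threshold are assumptions made for illustration, and the three tools do not necessarily report the same kind of number (a probability, a risk score, and a confidence), so the comparison is indicative at best.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DetectorResult:
    tool: str
    label: str
    score: float  # headline percentage as reported by the tool


# Figures as reported in this article. For Video Detector the risk score (58/100)
# is used here rather than its separately reported 62% confidence.
results = [
    DetectorResult("TruthScan", "Mixed", 43.0),
    DetectorResult("Video Detector", "High Risk", 58.0),
    DetectorResult("Undetectable AI", "AI-Generated", 84.0),
]


def summarize(items: List[DetectorResult], threshold: float = 50.0) -> Dict[str, object]:
    """Summarize agreement between detectors; the threshold is a hypothetical cut-off."""
    scores = [r.score for r in items]
    flagged = [r.tool for r in items if r.score >= threshold]
    return {
        "min": min(scores),
        "max": max(scores),
        "spread": max(scores) - min(scores),
        "flagged": flagged,
        "unanimous": len(flagged) in (0, len(items)),
    }


print(summarize(results))
```

Here two of the three tools land above the assumed threshold and the spread between the lowest and highest score is 41 points, which illustrates why a single detector reading should not be reported as a finding on its own.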

Why the official confirmation is decisive

Visual cues and automated detectors can strengthen a verification narrative, but they share the same limitation: repeated uploads and platform re-encoding can degrade the forensic signal. In this case, the strongest confirmation remains the official position of the Police Directorate of Montenegro, which said the circulating clip is an AI-generated deepfake and that steps are being taken to identify those involved in its creation and dissemination.

What audiences should do when similar clips surface

If you’re a newsroom, document your verification steps clearly: key timestamps, frame evidence, tool outputs, and what was independently confirmed. Do not share a clip as fact based on appearance alone; save a copy and verify it first.

Look for primary confirmation (official statements, court/police updates) and preserve the earliest upload where possible.
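
For newsrooms that want to keep such documentation in a consistent shape, a verification record could look something like the sketch below. The `VerificationLog` class and its field names are hypothetical, chosen for illustration, and the example values are taken from this article.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class VerificationLog:
    claim: str
    segments_reviewed: List[str] = field(default_factory=list)
    tool_outputs: Dict[str, str] = field(default_factory=dict)
    primary_confirmation: str = ""  # official statement, court or police update

    def is_confirmed(self) -> bool:
        """True only when a primary source has been recorded."""
        return bool(self.primary_confirmation.strip())

    def to_json(self) -> str:
        """Serialize the record so it can be archived with the saved clip."""
        return json.dumps(asdict(self), indent=2, ensure_ascii=False)


log = VerificationLog(
    claim="Viral clip allegedly shows Miloš Medenica speaking on camera",
    segments_reviewed=["0:03-0:06", "0:08-0:12", "0:13-0:17", "0:16-0:20"],
    tool_outputs={
        "TruthScan": "Mixed, 43% probability, low confidence",
        "Video Detector": "High Risk, risk score 58/100, 62% confidence",
        "Undetectable AI": "AI-Generated, 84% confidence",
    },
    primary_confirmation="Police Directorate of Montenegro: clip is an AI-generated deepfake",
)

print(log.to_json())
print("Primary confirmation recorded:", log.is_confirmed())
```

Keeping primary confirmation as its own field makes it harder to confuse tool output with independent confirmation when the record is reviewed later.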

(This text was written and reviewed by the editor with support from artificial intelligence tools for language editing and stylistic refinement.)